Some colleagues have pointed out that Sun's implementation of the Java SE 6 JRE includes a built-in Java HTTP Server. There is not much information about it online, but there are enough details to start using it for small HTTP service needs.

The main step a developer must perform to make use of the built-in HTTP server is to implement the HttpHandler interface. The Javadoc API description for com.sun.net.httpserver contains an example of implementing the HttpHandler and includes an example of how to apply TLS/SSL.

The code listing that follows is my own implementation of the HttpHandler interface and is adapted from the example provided in the Javadoc documentation for package com.sun.net.httpserver.

    package dustin.examples.httpserver;

    import com.sun.net.httpserver.HttpExchange;
    import com.sun.net.httpserver.HttpHandler;
    import java.io.IOException;
    import java.io.OutputStream;
    import java.net.HttpURLConnection;

    /**
     * Simple HTTP Server Handler example that demonstrates how easy it is to apply
     * the Http Server built-in to Sun's Java SE 6 JVM.
     */
    public class DustinHttpServerHandler implements HttpHandler
    {
       /**
        * Implementation of only required method expected of an implementation of
        * the HttpHandler interface.
        *
        * @param httpExchange Single-exchange HTTP request/response.
        */
       public void handle(final HttpExchange httpExchange) throws IOException
       {
          // Encode once so the declared length is a byte count, not a character count.
          final byte[] responseBytes = buildResponse().getBytes();
          // UPDATE (01 April 2008): Thanks to Christian Ullenboom
          // (http://www.blogger.com/profile/02403398196910607248)
          // for pointing out the constant used below
          // (see comments on this blog entry below).
          httpExchange.sendResponseHeaders(HttpURLConnection.HTTP_OK, responseBytes.length);
          final OutputStream os = httpExchange.getResponseBody();
          os.write(responseBytes);
          os.close();
       }

       /**
        * Build a String to return to the web browser via an HTTP response.
        *
        * @return String for HTTP response.
        */
       private String buildResponse()
       {
          final StringBuilder response = new StringBuilder();
          response.append("<title>Sun's JVM HttpServer in Action</title>");
          response.append("<h1 style=\"color: blue\">Hello, HttpServer!</h1>");
          response.append("<p>This example shows that the Java SE 6 HttpServer ");
          response.append("included with the Sun JVM is easy to use.</p>");
          return response.toString();
       }
    }
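
One detail of the handler above deserves care: the second argument to sendResponseHeaders is the length of the response body in bytes, which differs from the String length as soon as the text contains non-ASCII characters. The following is only a sketch of one way to handle that, assuming Java 7 or later (for StandardCharsets) and using a hypothetical class name and greeting that are not part of the original example.

```java
import com.sun.net.httpserver.HttpExchange;
import com.sun.net.httpserver.HttpHandler;
import java.io.IOException;
import java.io.OutputStream;
import java.net.HttpURLConnection;
import java.nio.charset.StandardCharsets;

/** Hypothetical handler variant; the class name and text are illustrative only. */
class Utf8HelloHandler implements HttpHandler
{
   /** Contains two non-ASCII characters (written as escapes), so chars != bytes. */
   static final String BODY = "<h1>Gr\u00fc\u00dfe, HttpServer!</h1>";

   public void handle(final HttpExchange httpExchange) throws IOException
   {
      // Encode once with an explicit charset and reuse the resulting bytes.
      final byte[] bytes = BODY.getBytes(StandardCharsets.UTF_8);
      // Tell the client which charset was used for the body.
      httpExchange.getResponseHeaders().set("Content-Type", "text/html; charset=UTF-8");
      // Declare the body length in bytes, not in characters.
      httpExchange.sendResponseHeaders(HttpURLConnection.HTTP_OK, bytes.length);
      final OutputStream os = httpExchange.getResponseBody();
      try
      {
         os.write(bytes);
      }
      finally
      {
         os.close();
      }
   }
}
```

For the all-ASCII page built in the listing above, both counts happen to agree in common encodings, so the difference only shows up once accented or other non-ASCII text is returned.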

The HttpHandler implementation shown above can be executed with a simple Java application as shown in the next code listing. Note that the port and the URL context that will be used to access the HttpServer are specified in this main executable code rather than in the more generic HttpHandler implementation above.

    package dustin.examples.httpserver;

    import com.sun.net.httpserver.HttpServer;
    import java.io.IOException;
    import java.net.InetSocketAddress;

    /**
     * Simple executable to start HttpServer for HTTP request/response interaction.
     */
    public class Main
    {
       public static final int PORT = 8000;

       public static final int BACKLOG = 0;  // zero or less: use the system default backlog

       public static final String URL_CONTEXT = "/dustin";

       /**
        * Main executable to run Sun's built-in JVM HTTP server.
        *
        * @param args the command line arguments
        */
       public static void main(String[] args) throws IOException
       {
          final HttpServer server
             = HttpServer.create(new InetSocketAddress(PORT), BACKLOG);
          server.createContext(URL_CONTEXT, new DustinHttpServerHandler());
          server.setExecutor(null);  // allow default executor to be created
          server.start();
       }
    }
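
The main class above passes null to setExecutor so that a default executor is used. As a rough sketch of an alternative, the server can be given an explicit thread pool and stopped from a JVM shutdown hook. The class name, pool size, one-second stop delay, and the inline handler below are all assumptions made for illustration and are not part of the original example.

```java
import com.sun.net.httpserver.HttpExchange;
import com.sun.net.httpserver.HttpHandler;
import com.sun.net.httpserver.HttpServer;
import java.io.IOException;
import java.io.OutputStream;
import java.net.InetSocketAddress;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

/** Hypothetical variant of the main class that supplies its own executor. */
class PooledServerMain
{
   static final int POOL_SIZE = 4;  // illustrative value, not a recommendation

   /** Creates and starts a server on the given port using the supplied pool. */
   static HttpServer startServer(final int port, final ExecutorService pool)
      throws IOException
   {
      final HttpServer server = HttpServer.create(new InetSocketAddress(port), 0);
      // Small inline handler so that this sketch compiles on its own.
      server.createContext("/dustin", new HttpHandler()
      {
         public void handle(final HttpExchange exchange) throws IOException
         {
            final byte[] bytes = "pooled".getBytes("UTF-8");
            exchange.sendResponseHeaders(200, bytes.length);
            final OutputStream os = exchange.getResponseBody();
            try { os.write(bytes); } finally { os.close(); }
         }
      });
      server.setExecutor(pool);  // requests are handled on the pool's threads
      server.start();
      return server;
   }

   public static void main(final String[] args) throws IOException
   {
      final ExecutorService pool = Executors.newFixedThreadPool(POOL_SIZE);
      final HttpServer server = startServer(8000, pool);
      // On JVM shutdown (CTRL-C, for instance), stop the server and the pool.
      Runtime.getRuntime().addShutdownHook(new Thread(new Runnable()
      {
         public void run()
         {
            server.stop(1);   // wait up to one second for open exchanges
            pool.shutdown();
         }
      }));
   }
}
```

Whether an explicit pool is worthwhile depends on the expected load; for the small services this post has in mind, the default executor may well be enough.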

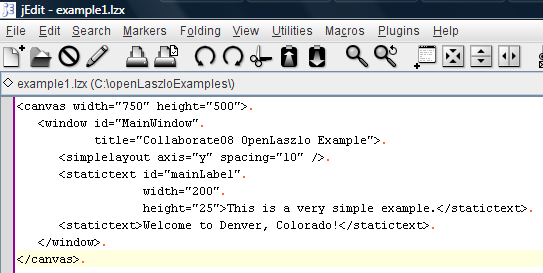

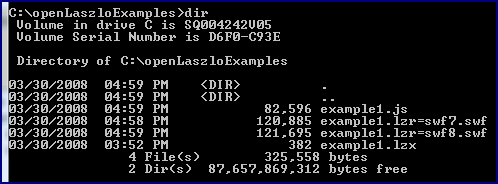

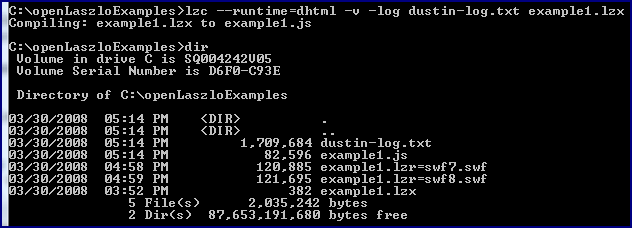

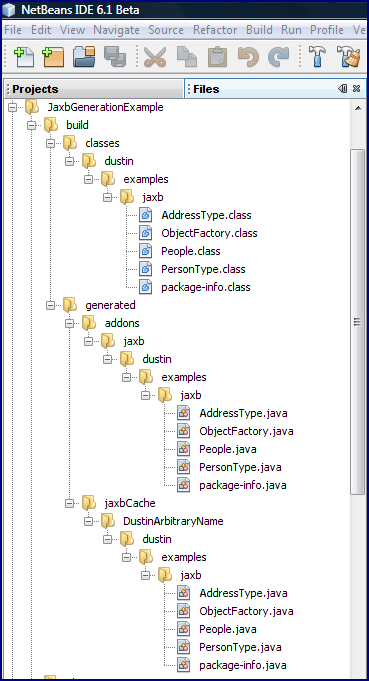

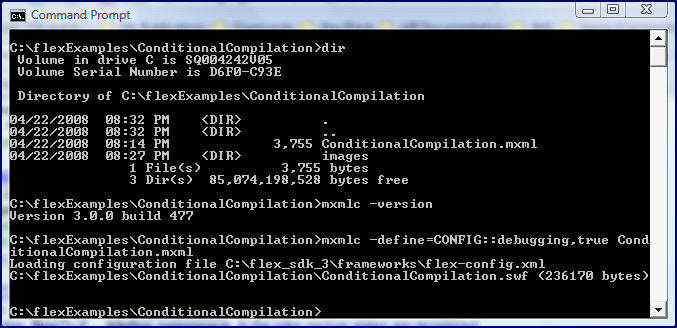

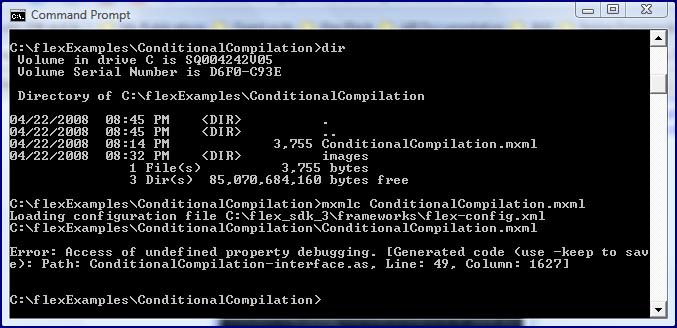

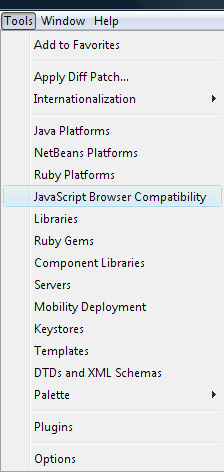

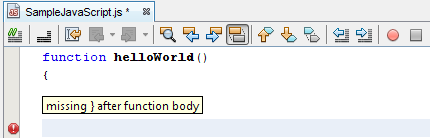

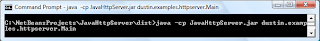

To compile these two classes, one simply performs normal compilation of the Java classes. To run the HTTP Server, one simply runs the main executable just shown. This is demonstrated in the following screen snapshot.

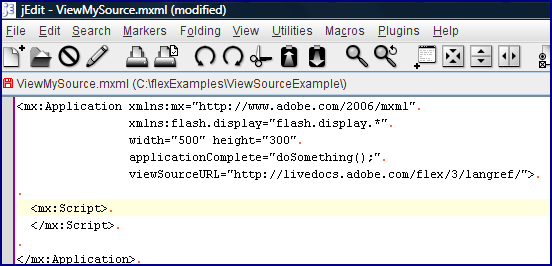

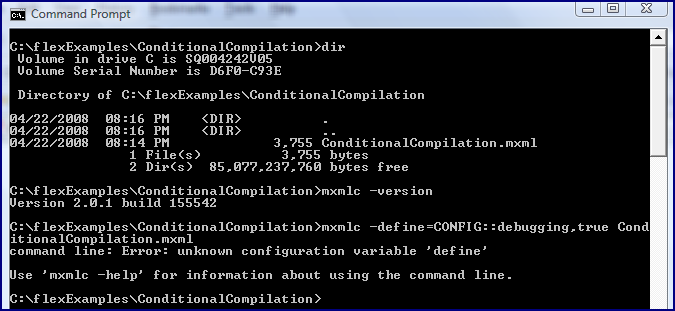

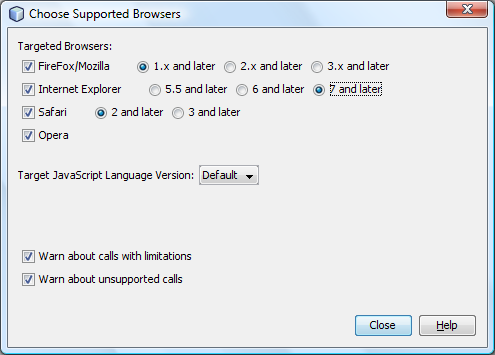

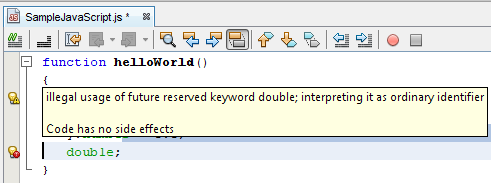

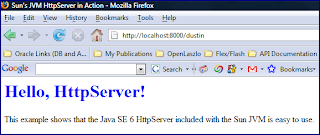

The HTTP Server runs until I stop it with CTRL-C. While it is running, I can view the rendered web page by accessing a URL based on the port specified in the main class (8000) and the web context specified in the main class ("/dustin"), such as http://localhost:8000/dustin when browsing from the same machine. A screen snapshot of the rendered page in a web browser is shown next.
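
Separately from the browser view, the same URL can be checked from code. The sketch below uses the standard HttpURLConnection class; the class name FetchDustinPage is hypothetical, and the address assumes the server is running on the local machine with the port and context from the main class. Decoding the body as UTF-8 is also an assumption, which is harmless for the all-ASCII page returned by the handler in this post.

```java
import java.io.IOException;
import java.io.InputStream;
import java.net.HttpURLConnection;
import java.net.URL;
import java.util.Scanner;

/** Hypothetical client for checking the running server without a browser. */
class FetchDustinPage
{
   /** Performs a GET on the given address and returns the response body. */
   static String fetch(final String address) throws IOException
   {
      final HttpURLConnection connection =
         (HttpURLConnection) new URL(address).openConnection();
      try
      {
         final int status = connection.getResponseCode();
         if (status != HttpURLConnection.HTTP_OK)
         {
            throw new IOException("Unexpected HTTP status: " + status);
         }
         final InputStream in = connection.getInputStream();
         try
         {
            // "\\A" matches the start of input, so next() returns the whole body.
            final Scanner scanner = new Scanner(in, "UTF-8").useDelimiter("\\A");
            return scanner.hasNext() ? scanner.next() : "";
         }
         finally
         {
            in.close();
         }
      }
      finally
      {
         connection.disconnect();
      }
   }

   public static void main(final String[] args) throws IOException
   {
      // Assumes the server from this post is already running locally.
      System.out.println(fetch("http://localhost:8000/dustin"));
   }
}
```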

There are several important observations to be made regarding use of the HTTP Server.

- The package name com.sun.net.httpserver makes it clear that this functionality is part of Sun's JRE implementation and is not standard across all JREs for Java SE 6.

- It is not shown here, but the Spring framework provides a SimpleHttpInvokerServiceExporter to make use of the Java HTTP Server. Related classes include SimpleHttpServerFactoryBean and SimpleHttpInvokerRequestExecutor. See also the "Spring 2.5, an Update" presentation (slide 16).

- The Java HTTP server is not a full-fledged HTTP Server.

- This blog entry refers to the Java built-in HTTP server as "Core HTTP Server" and references "mini servlets."

- See Bug ID 6270015 ("Support a Light-weight HTTP Server API").
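
As one small illustration of the "not a full-fledged HTTP Server" observation: contexts are selected by URL path only, so checks such as which request methods are allowed are left to the handler. The sketch below shows one way a handler might reject anything other than GET. The class name and response text are hypothetical and not part of the original example.

```java
import com.sun.net.httpserver.HttpExchange;
import com.sun.net.httpserver.HttpHandler;
import java.io.IOException;
import java.io.OutputStream;
import java.net.HttpURLConnection;

/** Hypothetical handler that answers GET and rejects every other method. */
class GetOnlyHandler implements HttpHandler
{
   public void handle(final HttpExchange exchange) throws IOException
   {
      if (!"GET".equalsIgnoreCase(exchange.getRequestMethod()))
      {
         // Advertise the supported method and reply 405 Method Not Allowed.
         exchange.getResponseHeaders().set("Allow", "GET");
         // A length of -1 indicates that no response body will be sent.
         exchange.sendResponseHeaders(HttpURLConnection.HTTP_BAD_METHOD, -1);
         exchange.close();
         return;
      }
      final byte[] bytes = "GET received".getBytes("UTF-8");
      exchange.sendResponseHeaders(HttpURLConnection.HTTP_OK, bytes.length);
      final OutputStream os = exchange.getResponseBody();
      try
      {
         os.write(bytes);
      }
      finally
      {
         os.close();
      }
   }
}
```

Such a handler would be registered with createContext in the same way as DustinHttpServerHandler in the main class.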

As the code listings and snapshots above demonstrate, it is a straightforward process to implement a simple Java HTTP Server using the Sun-supplied built-in Java HTTP Server.

UPDATE (23 November 2008): The post "Software Development: Easiest way to publish Java Web Services --how to" talks about how to use this HTTP Server to publish web services.