I don't need to use java.util.Date much anymore these days, but recently chose to do so and was reminded of the pain of using the APIs associated with Java Date. In this post, I look at a couple of the somewhat surprising API expectations of the deprecated parameterized Date constructor that accepts six integers.

In 2016, Java developers are probably most likely to use Java 8's new Date/Time API if writing new code in Java SE 8 or are likely to use a third-party Java date/time library such as Joda-Time if using a version of Java prior to Java 8. I chose to use Date recently in a very simple Java-based tool that I wanted to be deliverable as a single Java source code file (easy to compile without a build tool) and to not depend on any libraries outside Java SE. The target deployment environment for this simple tool is Java SE 7, so the Java 8 Date/Time API was not an option.

One of the disadvantages of the Date constructor that accepts six integers is that those six integers are hard to tell apart, which makes it easy to provide them in the wrong order. Even when the proper order is followed, there are subtle surprises associated with specifying the month and year. Perhaps the easiest ways to properly instantiate a Date object are SimpleDateFormat.parse(String) and the non-deprecated Date(long) constructor, which accepts milliseconds since epoch zero (1 January 1970 00:00:00 UTC).

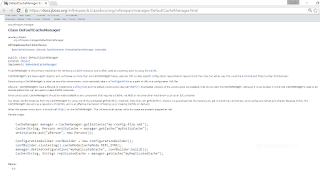

My first code listing demonstrates instantiating a Date that represents "26 September 2016" with 0 hours, 0 minutes, and 0 seconds. It creates the Date from a String via SimpleDateFormat.parse(String).

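```java
// DEFAULT_FORMAT, CONTROL_DATE_TIME_STR, and printDate(String, Date) are defined
// elsewhere in the tool (not shown); parse(String) throws the checked ParseException.
final SimpleDateFormat formatter = new SimpleDateFormat(DEFAULT_FORMAT);
final Date controlDate = formatter.parse(CONTROL_DATE_TIME_STR);
printDate("Control Date/Time", controlDate);
```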

When the above is run, the printed result is as expected: the output date matches the string that was parsed to create the Date instance.

```
=============================================================
= Control Date/Time -> Mon Sep 26 00:00:00 MDT 2016
=============================================================
```
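The control listing relies on constants that are not shown. A self-contained version of the same idea, together with the Date(long) alternative mentioned earlier, might look like the minimal sketch below. The class name TwoWaysToCreateDate, the pattern "dd MMMM yyyy HH:mm:ss", Locale.US, and the use of UTC are all assumptions made for illustration. The value 1474848000000 is the number of milliseconds from epoch zero to 26 September 2016 00:00:00 UTC.

```java
import java.text.ParseException;
import java.text.SimpleDateFormat;
import java.util.Date;
import java.util.Locale;
import java.util.TimeZone;

// Hypothetical example class; the pattern, locale, and UTC time zone are
// illustrative assumptions, not taken from the original tool.
class TwoWaysToCreateDate {

    // Approach 1: parse a human-readable String with an explicit pattern.
    static Date fromString(String text) {
        SimpleDateFormat formatter = new SimpleDateFormat("dd MMMM yyyy HH:mm:ss", Locale.US);
        formatter.setTimeZone(TimeZone.getTimeZone("UTC"));
        try {
            return formatter.parse(text);
        } catch (ParseException e) {
            throw new IllegalArgumentException("Unparseable date: " + text, e);
        }
    }

    // Approach 2: the non-deprecated Date(long) constructor, which takes
    // milliseconds since 1 January 1970 00:00:00 UTC.
    static Date fromMillis(long millisSinceEpoch) {
        return new Date(millisSinceEpoch);
    }

    public static void main(String[] args) {
        Date parsed = fromString("26 September 2016 00:00:00");
        Date fromMillis = fromMillis(1474848000000L); // 26 Sep 2016 00:00:00 UTC
        System.out.println(parsed.equals(fromMillis)); // prints "true"
    }
}
```

Pinning the formatter to an explicit locale and time zone keeps the parsed instant independent of the machine the tool runs on.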

It can be tempting to use the Date constructors that accept integers to represent different "fields" of a Date instance, but these present the previously mentioned nuances.

The next code listing shows a very naive approach to invoking the Date constructor which accepts six integers representing these fields in this order: year, month, date, hour, minutes, seconds.

```java
// This will NOT be the intended Date of 26 September 2016
// with 0 hours, 0 minutes, and 0 seconds because both the
// "month" and "year" parameters are NOT appropriate.
final Date naiveDate = new Date(2016, 9, 26, 0, 0, 0);
printDate("new Date(2016, 9, 26, 0, 0, 0)", naiveDate);
```

The output from running the above code has neither the same month (October rather than September) nor the same year (not 2016) as the "control" case shown earlier.

```
=============================================================
= new Date(2016, 9, 26, 0, 0, 0) -> Thu Oct 26 00:00:00 MDT 3916
=============================================================
```
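One way to confirm how the constructor interpreted 2016 and 9 is to read the resulting instant back through a GregorianCalendar. The following minimal sketch does that. The class name NaiveDateInspection is hypothetical, and the sketch assumes the JVM's default time zone, which the deprecated constructor also uses.

```java
import java.util.Calendar;
import java.util.Date;
import java.util.GregorianCalendar;

// Hypothetical demonstration class: reads back the fields that the deprecated
// six-int Date constructor actually stored.
class NaiveDateInspection {

    @SuppressWarnings("deprecation")
    static Calendar naiveCalendar() {
        // Same naive call as in the listing: 2016 and 9 are passed "as written".
        Date naiveDate = new Date(2016, 9, 26, 0, 0, 0);
        Calendar calendar = new GregorianCalendar(); // default time zone, like Date
        calendar.setTime(naiveDate);
        return calendar;
    }

    public static void main(String[] args) {
        Calendar c = naiveCalendar();
        System.out.println(c.get(Calendar.YEAR));                      // 3916
        System.out.println(c.get(Calendar.MONTH) == Calendar.OCTOBER); // true
        System.out.println(c.get(Calendar.DAY_OF_MONTH));              // 26
    }
}
```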

The month was one later than expected (October rather than September) because the month parameter is zero-based: January is represented by 0, so September is represented by 8 rather than 9. One of the easiest ways to deal with the zero-based month and make the call to the Date constructor more readable is to use the appropriate java.util.Calendar month constant. The next example demonstrates doing this with Calendar.SEPTEMBER.

```java
// This will NOT be the intended Date of 26 September 2016
// with 0 hours, 0 minutes, and 0 seconds because the
// "year" parameter is not correct.
final Date naiveDate = new Date(2016, Calendar.SEPTEMBER, 26, 0, 0, 0);
printDate("new Date(2016, Calendar.SEPTEMBER, 26, 0, 0, 0)", naiveDate);
```

The code snippet just listed fixes the month, but the year is still wrong, as the following output shows.

```
=============================================================
= new Date(2016, Calendar.SEPTEMBER, 26, 0, 0, 0) -> Tue Sep 26 00:00:00 MDT 3916
=============================================================
```

The year is still 1900 years off (3916 instead of 2016). This is because the first integer parameter of the six-integer Date constructor is specified as the year minus 1900. Providing 2016 as that first argument therefore specifies the year 2016 + 1900 = 3916. To fix this, we need to provide 116 (2016 - 1900) as the first int parameter instead. To make this more readable for anyone who would find it surprising, I like to code it literally as 2016-1900, as shown in the next code listing.

```java
final Date date = new Date(2016-1900, Calendar.SEPTEMBER, 26, 0, 0, 0);
printDate("new Date(2016-1900, Calendar.SEPTEMBER, 26, 0, 0, 0)", date);
```

With the month specified via a zero-based Calendar constant and the intended year expressed as that year less 1900, the Date is instantiated correctly, as demonstrated in the next output listing.

```
=============================================================
= new Date(2016-1900, Calendar.SEPTEMBER, 26, 0, 0, 0) -> Mon Sep 26 00:00:00 MDT 2016
=============================================================
```
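The two offsets are symmetric. The deprecated Date getters use the same "year minus 1900" and zero-based month conventions as the constructor, while a GregorianCalendar reports the natural values for the same instant. The minimal sketch below shows both. CorrectedDateCheck is a hypothetical class name, and the default time zone is assumed throughout.

```java
import java.util.Calendar;
import java.util.Date;
import java.util.GregorianCalendar;

// Hypothetical demonstration class for the corrected six-int constructor call.
class CorrectedDateCheck {

    @SuppressWarnings("deprecation")
    static Date correctedDate() {
        // 2016 - 1900 == 116; Calendar.SEPTEMBER == 8 (months are zero-based).
        return new Date(2016 - 1900, Calendar.SEPTEMBER, 26, 0, 0, 0);
    }

    @SuppressWarnings("deprecation")
    public static void main(String[] args) {
        Date date = correctedDate();

        // The deprecated getters use the same offsets as the constructor.
        System.out.println(date.getYear());  // 116
        System.out.println(date.getMonth()); // 8

        // Reading the same instant through a GregorianCalendar gives the
        // "natural" year and the Calendar month constant.
        Calendar calendar = new GregorianCalendar();
        calendar.setTime(date);
        System.out.println(calendar.get(Calendar.YEAR));                        // 2016
        System.out.println(calendar.get(Calendar.MONTH) == Calendar.SEPTEMBER); // true
    }
}
```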

The Javadoc documentation for Date does describe these nuances, but this is a reminder that it's often better to have clear, understandable APIs whose nuances don't need to be explained in documentation. The Javadoc for the Date(int, int, int, int, int, int) constructor does state that 1900 must be subtracted from the year and that months are represented by integers from 0 through 11. It also states what replaces this deprecated six-integer constructor: "As of JDK version 1.1, replaced by Calendar.set(year + 1900, month, date, hrs, min, sec) or GregorianCalendar(year + 1900, month, date, hrs, min, sec)." Here, year is the constructor's "year minus 1900" argument, so year + 1900 is simply the actual year.
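The Calendar.set(...) replacement named in that Javadoc could be used roughly as in the minimal sketch below. The helper name dateViaCalendarSet and its class are hypothetical. One detail worth noting is the call to clear(). The six-argument Calendar.set retains the previous values of fields it doesn't set, so without clear() the MILLISECOND field would keep whatever value the calendar held when it was created from the current time.

```java
import java.util.Calendar;
import java.util.Date;
import java.util.GregorianCalendar;

// Hypothetical helper showing the Calendar.set(...) replacement that the
// Date(int, int, int, int, int, int) Javadoc recommends.
class CalendarSetReplacement {

    static Date dateViaCalendarSet(int year, int zeroBasedMonth, int dayOfMonth,
                                   int hours, int minutes, int seconds) {
        Calendar calendar = new GregorianCalendar(); // default time zone, like Date
        // clear() resets all fields (including MILLISECOND) but keeps the time zone.
        calendar.clear();
        // The real year is passed here; no "minus 1900" adjustment.
        calendar.set(year, zeroBasedMonth, dayOfMonth, hours, minutes, seconds);
        return calendar.getTime();
    }

    @SuppressWarnings("deprecation")
    public static void main(String[] args) {
        Date viaCalendar = dateViaCalendarSet(2016, Calendar.SEPTEMBER, 26, 0, 0, 0);
        Date viaDeprecated = new Date(2016 - 1900, Calendar.SEPTEMBER, 26, 0, 0, 0);
        System.out.println(viaCalendar.equals(viaDeprecated)); // true
    }
}
```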

The similar six-integer GregorianCalendar(int, int, int, int, int, int) constructor is not deprecated. Although it still expects a zero-based month parameter, it does not require subtracting 1900 from the actual year when providing the year parameter. When the month is specified with the appropriate Calendar month constant, the call becomes far more readable: 2016 can be passed for the year and Calendar.SEPTEMBER for the month.
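A minimal sketch of that non-deprecated alternative follows, producing the same 26 September 2016 instant. The class and method names are hypothetical, and the default time zone is assumed, just as in the Date examples above.

```java
import java.util.Calendar;
import java.util.Date;
import java.util.GregorianCalendar;

// Hypothetical demonstration of the non-deprecated GregorianCalendar
// six-int constructor: full year, zero-based month via a Calendar constant.
class GregorianCalendarConstruction {

    static Date september26th2016() {
        // 2016 is passed as-is; no "minus 1900" adjustment is needed.
        return new GregorianCalendar(2016, Calendar.SEPTEMBER, 26, 0, 0, 0).getTime();
    }

    @SuppressWarnings("deprecation")
    public static void main(String[] args) {
        Date viaGregorian = september26th2016();
        Date viaDeprecated = new Date(2016 - 1900, Calendar.SEPTEMBER, 26, 0, 0, 0);
        System.out.println(viaGregorian.equals(viaDeprecated)); // true
    }
}
```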

I use the Date class directly so rarely now that I forget its nuances and must re-learn them when the rare occasion presents itself for me to use Date again. So, I am leaving these observations regarding Date for my future self.

- If using Java 8+, use the Java 8 Date/Time API.

- If using a version of Java prior to Java 8, use Joda-Time or another improved Java library.

- If unable to use Java 8 or a third-party library, use Calendar instead of Date as much as possible, especially for instantiation.

- If using Date anyway, instantiate the Date using either the SimpleDateFormat.parse(String) approach or the Date(long) constructor, which creates the Date from milliseconds since epoch zero.

- If using the Date constructors that accept multiple integers representing date/time components individually, use the appropriate Calendar month constant to make the API calls more readable, and consider writing a simple builder to "wrap" the calls to the six-integer constructor.
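The builder suggested in the last item could look something like the following minimal sketch. DateBuilder and its method names are hypothetical and do not come from any library. The builder still delegates to the deprecated six-integer constructor, but it confines the "year minus 1900" rule to one place and makes each argument's role explicit at the call site. It avoids lambdas and java.time so that it stays usable on Java SE 7.

```java
import java.util.Calendar;
import java.util.Date;

// Hypothetical builder (not part of any library) that wraps the deprecated
// six-int Date constructor so callers name each field and pass the real year.
class DateBuilder {
    private int year = 1970;              // full year, e.g. 2016
    private int month = Calendar.JANUARY; // zero-based; use Calendar constants
    private int dayOfMonth = 1;
    private int hours;
    private int minutes;
    private int seconds;

    DateBuilder year(int fullYear) { this.year = fullYear; return this; }

    DateBuilder month(int calendarMonthConstant) {
        if (calendarMonthConstant < Calendar.JANUARY || calendarMonthConstant > Calendar.DECEMBER) {
            throw new IllegalArgumentException(
                "Use a Calendar month constant (0-11): " + calendarMonthConstant);
        }
        this.month = calendarMonthConstant;
        return this;
    }

    DateBuilder dayOfMonth(int day) { this.dayOfMonth = day; return this; }
    DateBuilder hours(int h) { this.hours = h; return this; }
    DateBuilder minutes(int m) { this.minutes = m; return this; }
    DateBuilder seconds(int s) { this.seconds = s; return this; }

    @SuppressWarnings("deprecation")
    Date build() {
        // The only place that knows about the "year minus 1900" rule.
        return new Date(year - 1900, month, dayOfMonth, hours, minutes, seconds);
    }

    public static void main(String[] args) {
        Date date = new DateBuilder()
                .year(2016)
                .month(Calendar.SEPTEMBER)
                .dayOfMonth(26)
                .build();
        System.out.println(date); // printed form depends on the default time zone
    }
}
```

Because every field is set by name, accidentally swapping the day and the month is much harder than with six positional int arguments. Defaulting unspecified fields to 1970-01-01 00:00:00 is an arbitrary choice of this sketch.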

We can learn a lot about what makes an API easy or difficult to learn by using other people's APIs. Hopefully these lessons will benefit us in writing our own APIs. The Date(int, int, int, int, int, int) constructor that was the focus of this post presents several issues that make for a less than optimal API. The multiple parameters of the same type make it easy to provide the parameters out of order. The "not natural" rules for providing the year and month also put an extra burden on the client developer, who must read the Javadoc to understand these not-so-obvious rules.